Implementing deep learning algorithms from scratch using Python and NumPy is a good way to understand the basic concepts and to see what these algorithms are really doing by opening up the deep learning black box.

However, as you start to implement very large or more complex models, such as convolutional neural networks (CNNs) or recurrent neural networks (RNNs), it becomes increasingly impractical, at least for most people like me, to implement everything yourself from scratch.

Even if you understand how matrix multiplication works and can implement it in your own code, as you build very large applications you will probably not want to write your own matrix multiplication function. Instead, you will want to call a numerical linear algebra library that can do it more efficiently for you. Right?
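To make that concrete, here is a minimal sketch comparing a hand-written matrix multiplication with NumPy's built-in `@` operator. The function name `naive_matmul`, the 60×60 matrix size, and the random seed are my own choices for illustration; the exact timings will vary from machine to machine.

```python
import time

import numpy as np


def naive_matmul(a, b):
    """Multiply two 2-D arrays with explicit Python loops (illustrative helper)."""
    n, k = a.shape
    k2, m = b.shape
    assert k == k2, "inner dimensions must match"
    out = np.zeros((n, m))
    for i in range(n):
        for j in range(m):
            total = 0.0
            for p in range(k):
                total += a[i, p] * b[p, j]
            out[i, j] = total
    return out


# Small random matrices; the size is an arbitrary choice for this demo.
rng = np.random.default_rng(0)
a = rng.standard_normal((60, 60))
b = rng.standard_normal((60, 60))

# Time the hand-written triple loop.
start = time.perf_counter()
slow = naive_matmul(a, b)
naive_seconds = time.perf_counter() - start

# Time the library call, which delegates to optimized compiled code.
start = time.perf_counter()
fast = a @ b
library_seconds = time.perf_counter() - start

print("same result:", np.allclose(slow, fast))
print(f"naive loops: {naive_seconds:.4f} s, NumPy: {library_seconds:.6f} s")
```

Both versions compute the same product, but the library call is one line and is typically much faster, and the gap tends to widen as the matrices grow.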

An efficient implementation helps you fail fast 😃 and so complete each iteration of the IDEA -> EXPERIMENT -> CODE cycle much more quickly.

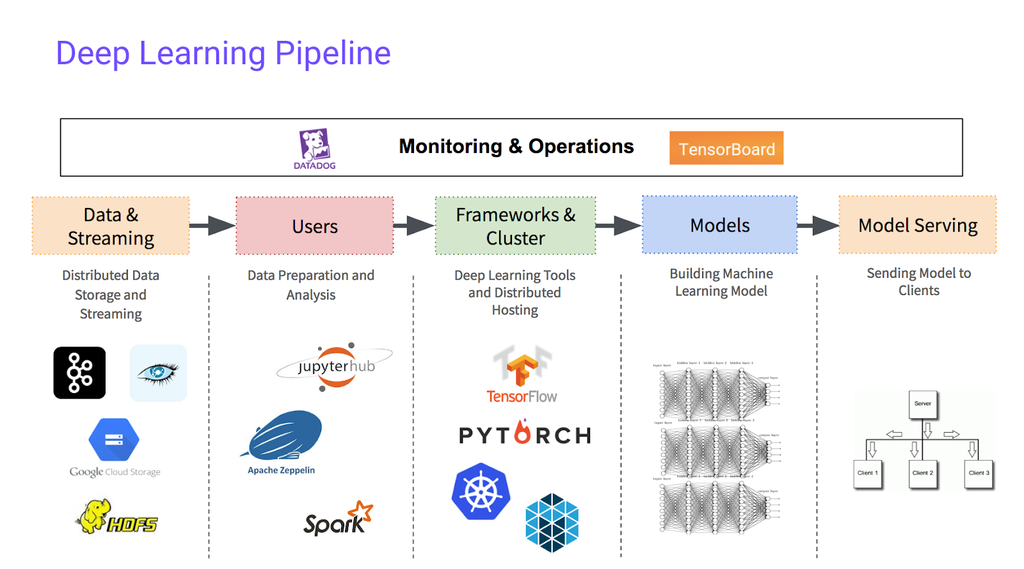

I think this is crucially important when you are in the middle of a deep learning pipeline.

So let's take a look at the frameworks out there…

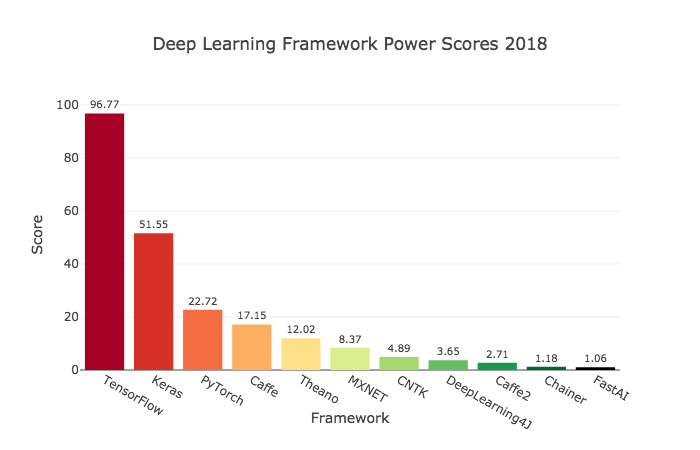

Today, there are many deep learning frameworks that make it easy for you to implement neural networks, and here are some of the leading ones.

Each of these frameworks has a dedicated user and developer community, and I think each of them is a credible choice for some subset of applications. However, when I look at the popularity graph, my obvious choice is TensorFlow.

Well, I just said that I would choose TensorFlow. But are popularity scores the only thing that matters when choosing a framework for your deep learning project? It turns out they are not…

I think many of these frameworks are evolving and getting better very rapidly. A framework that tops the popularity rankings in 2018 may not hold the same position by the end of 2019.

Lots of people write articles comparing these deep learning frameworks and tracking how they change. Because the frameworks are often evolving and improving month to month, I'll leave you to do a few internet searches yourself if you want to see the arguments on the pros and cons of each.

So, how can you make a decision about which framework to use?

Rather than strongly endorsing any of these frameworks, I would like to share three factors that Stanford Professor Andrew Ng considers important enough to influence your decision.

1) Ease of programming

This includes developing, iterating, and finally, deploying your neural network to production where it may be used by millions of users.

2) Running speed

Training on large data sets can take a lot of time, so differences in training speed between frameworks can make your workflow a lot more time-efficient.

3) Openness

This last criterion is not often discussed, but Andrew Ng believes it is also very important. A truly open framework must be open source, of course, but must also be governed well.

So it is important to use a framework from a company that you can trust. As more people start to use the software, the company should not gradually close off what was open source, or move functionality into its own proprietary cloud services.

But at least in the short term, depending on your preferred language (Python, Java, C++, or something else) and on the application you're working on (computer vision, natural language processing, online advertising, or something else), I think several of these frameworks could be a good choice.

So that was just a high-level overview of deep learning programming frameworks. Any of these frameworks can make you more efficient as you develop machine learning applications.

In a subsequent post, we'll take a step from zero → one to learn TensorFlow.

That's it for this post. My name is Vivek from Goalist. See you soon with another post; until then, Happy Learning :)