Hello World! My name is Vivek Amilkanthawar

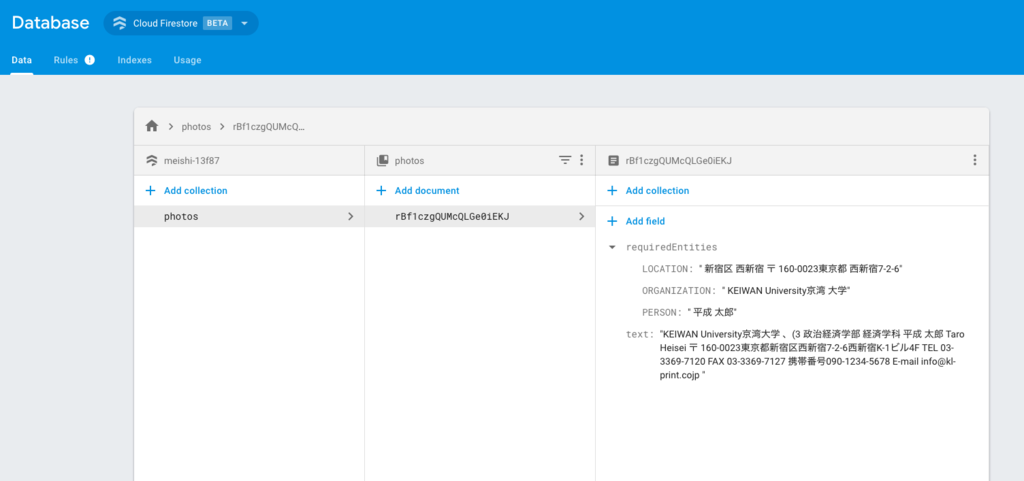

In the last blog post, we wrote a cloud function for our Business Card Reader app that does the heavy lifting of text recognition and stores the result in the database, using Firebase, the Google Cloud Vision API and the Google Natural Language API.

We broke the entire process down into the following steps.

1) User uploads an image to Firebase storage via @angular/fire in Ionic.

2) The upload triggers a storage cloud function.

3) The cloud function sends the image to the Cloud Vision API.

4) The result of the image analysis is then sent to the Cloud Natural Language API, and the final results are saved in Firestore.

5) The final result is then updated in realtime in the Ionic UI.

Of these, steps #2, #3 and #4 were explained in the last blog post.

If you have missed the last post, you can find it here...

In this blog post, we'll work on the frontend and create an Ionic app for iOS and Android (steps #1 and #5).

The final app will look something like this on iOS.

So let's get started.

Step 1: Create and initialize an Ionic project

Let's generate a new Ionic app using the blank template. I have named my app meishi (めいし), which means 'business card' in Japanese.

```bash
ionic start meishi blank
cd meishi
```

Make sure you are in the Ionic root directory, then generate a new page with the following command:

```bash
ionic g page vision
```

We'll use VisionPage as the root page of the app in app.component.ts:

```typescript
import { Component } from '@angular/core';
import { VisionPage } from '../pages/vision/vision';

@Component({
  templateUrl: 'app.html'
})
export class MyApp {
  rootPage: any = VisionPage;
  // ...skipped
}
```

Add the @angular/fire and firebase dependencies to the Ionic project so that it can communicate with Firebase.

```bash
npm install @angular/fire firebase --save
```

Add @ionic-native/camera so that the app can use the native camera to capture the business card image for processing.

```bash
ionic cordova plugin add cordova-plugin-camera
npm install --save @ionic-native/camera
```

At this point, let's register AngularFire and the native camera plugin in the app.module.ts

(add your own Firebase project credentials in firebaseConfig)

```typescript
import {BrowserModule} from '@angular/platform-browser';
import {ErrorHandler, NgModule} from '@angular/core';
import {IonicApp, IonicErrorHandler, IonicModule} from 'ionic-angular';
import {SplashScreen} from '@ionic-native/splash-screen';
import {StatusBar} from '@ionic-native/status-bar';

import {MyApp} from './app.component';
import {HomePage} from '../pages/home/home';
import {VisionPage} from '../pages/vision/vision';

import {AngularFireModule} from '@angular/fire';
import {AngularFirestoreModule} from '@angular/fire/firestore';
import {AngularFireStorageModule} from '@angular/fire/storage';
import {Camera} from '@ionic-native/camera';

const firebaseConfig = {
  apiKey: 'xxxxxx',
  authDomain: 'xxxxxx.firebaseapp.com',
  databaseURL: 'https://xxxxxx.firebaseio.com',
  projectId: 'xxxxxx',
  storageBucket: 'xxxx.appspot.com',
  messagingSenderId: 'xxxxxx',
};

@NgModule({
  declarations: [
    MyApp,
    HomePage,
    VisionPage,
  ],
  imports: [
    BrowserModule,
    IonicModule.forRoot(MyApp),
    AngularFireModule.initializeApp(firebaseConfig),
    AngularFirestoreModule,
    AngularFireStorageModule,
  ],
  bootstrap: [IonicApp],
  entryComponents: [
    MyApp,
    HomePage,
    VisionPage,
  ],
  providers: [
    StatusBar,
    SplashScreen,
    {provide: ErrorHandler, useClass: IonicErrorHandler},
    Camera,
  ],
})
export class AppModule {
}
```

Step 2: Let's make it work

There is a lot going on in the VisionPage component, so let's break it down step by step.

1) The user taps the "Capture Image" button, which triggers captureAndUpload() to bring up the device camera.

2) The camera returns the image as a Base64 string. I have reduced the image quality to cut processing time. For me, even at 50% image quality, the Google Vision API does well.

3) We generate an ID that is used for both the image filename and the Firestore document ID.

4) We then listen to this location in Firestore.

5) An upload task is created to transfer the file to storage.

6) We wait for the cloud function (see my last post) to update Firestore.

7) Once the data is received from Firestore, we use the helper methods extractEmail() and extractContact() to extract email and contact information from the received string.

8) And it's done!!
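Steps 2 to 5 hinge on one shared ID: it names the uploaded file and also the Firestore document the app listens to. The sketch below isolates that pairing in plain TypeScript, with no Firebase dependency. `uploadTargets` and `toDataUrl` are hypothetical helper names introduced here for illustration only; the `photos` collection and the `.jpg` suffix come from the VisionPage code, and in the real app the ID comes from AngularFirestore's `createId()`.

```typescript
// Hypothetical helpers (not part of the app) that mirror what startUpload() does.

interface UploadTargets {
  storagePath: string;   // file name in Firebase Storage
  documentPath: string;  // Firestore document the UI listens to
}

// One ID drives both locations, so the app knows in advance which
// document to watch for the result of a given upload.
function uploadTargets(docId: string, collection: string = 'photos'): UploadTargets {
  return {
    storagePath: `${docId}.jpg`,
    documentPath: `${collection}/${docId}`,
  };
}

// The camera returns bare Base64; an upload in 'data_url' format needs the
// data URL prefix in front of it. The default MIME type is an assumption.
function toDataUrl(base64: string, mimeType: string = 'image/jpeg'): string {
  return `data:${mimeType};base64,${base64}`;
}

const targets = uploadTargets('abc123');
console.log(targets.storagePath);   // abc123.jpg
console.log(targets.documentPath);  // photos/abc123
console.log(toDataUrl('AAAA'));     // data:image/jpeg;base64,AAAA
```

Because the document path is derived before the upload starts, the app can subscribe first and upload second, which is the order the component follows.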

```typescript
import {Component} from '@angular/core';
import {IonicPage, Loading, LoadingController} from 'ionic-angular';
import {Observable} from 'rxjs/Observable';
import {filter, tap} from 'rxjs/operators';
// Import from @angular/fire, the package installed in Step 1
import {AngularFireStorage, AngularFireUploadTask} from '@angular/fire/storage';
import {AngularFirestore} from '@angular/fire/firestore';
import {Camera, CameraOptions} from '@ionic-native/camera';

@IonicPage()
@Component({
  selector: 'page-vision',
  templateUrl: 'vision.html',
})
export class VisionPage {
  // Upload task
  task: AngularFireUploadTask;
  // Firestore data
  result$: Observable<any>;
  loading: Loading;
  image: string;

  constructor(
    private storage: AngularFireStorage,
    private afs: AngularFirestore,
    private camera: Camera,
    private loadingCtrl: LoadingController) {
  }

  startUpload(file: string) {
    // Create a fresh loader per upload: a dismissed Loading cannot be presented again
    this.loading = this.loadingCtrl.create({
      content: 'Running AI vision analysis...',
    });
    this.loading.present();

    // One ID for both the image file name and the Firestore document
    const docId = this.afs.createId();
    const path = `${docId}.jpg`;

    // Make a reference to the future location of the Firestore document
    const photoRef = this.afs.collection('photos').doc(docId);

    // Firestore observable: skip empty snapshots, hide the loader once data arrives
    this.result$ = photoRef.valueChanges().pipe(
      filter(data => !!data),
      tap(_ => this.loading.dismiss()),
    );

    // The main task
    this.image = 'data:image/jpeg;base64,' + file;
    this.task = this.storage.ref(path).putString(this.image, 'data_url');
  }

  // Gets the pic from the device, then starts the upload
  async captureAndUpload() {
    const options: CameraOptions = {
      quality: 50,
      destinationType: this.camera.DestinationType.DATA_URL,
      encodingType: this.camera.EncodingType.JPEG,
      mediaType: this.camera.MediaType.PICTURE,
      // Picks from the photo library; use PictureSourceType.CAMERA to open the camera
      sourceType: this.camera.PictureSourceType.PHOTOLIBRARY,
    };
    const base64 = await this.camera.getPicture(options);
    this.startUpload(base64);
  }

  // Returns the first email address found on each line of the text
  extractEmail(str: string) {
    const emailRegex = /(([^<>()\[\]\\.,;:\s@"]+(\.[^<>()\[\]\\.,;:\s@"]+)*)|(".+"))@((\[[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}])|(([a-zA-Z\-0-9]+\.)+[a-zA-Z]{2,}))/;
    return this.removeByRegex(str, emailRegex).matches;
  }

  // Returns the first phone-like number found on each line of the text
  extractContact(str: string) {
    const contactRegex = /(?:(\+?\d{1,3}) )?(?:([\(]?\d+[\)]?)[ -])?(\d{1,5}[\- ]?\d{1,5})/;
    return this.removeByRegex(str, contactRegex).matches;
  }

  // Collects the matches line by line and returns them with the remaining text
  removeByRegex(str: string, regex: RegExp) {
    const matches: string[] = [];
    const cleanedText = str.split('\n').filter(line => {
      const hits = line.match(regex);
      if (hits != null) {
        matches.push(hits[0]);
        return false;
      }
      return true;
    }).join('\n');
    return {matches, cleanedText};
  }
}
```
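To see what the helper methods return, here is a minimal standalone sketch that runs the same two patterns outside Angular. The regular expressions are copied from VisionPage; the free functions and the sample text are made up for illustration and are not real OCR output.

```typescript
// Patterns copied from VisionPage; the functions are standalone versions of its helpers.
const EMAIL_REGEX = /(([^<>()\[\]\\.,;:\s@"]+(\.[^<>()\[\]\\.,;:\s@"]+)*)|(".+"))@((\[[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}\.[0-9]{1,3}])|(([a-zA-Z\-0-9]+\.)+[a-zA-Z]{2,}))/;
const CONTACT_REGEX = /(?:(\+?\d{1,3}) )?(?:([\(]?\d+[\)]?)[ -])?(\d{1,5}[\- ]?\d{1,5})/;

// Keeps the first match of every matching line and returns the
// non-matching lines as the cleaned text.
function removeByRegex(str: string, regex: RegExp): { matches: string[]; cleanedText: string } {
  const matches: string[] = [];
  const cleanedText = str
    .split('\n')
    .filter(line => {
      const hits = line.match(regex);
      if (hits !== null) {
        matches.push(hits[0]);
        return false;
      }
      return true;
    })
    .join('\n');
  return { matches, cleanedText };
}

const extractEmail = (str: string): string[] => removeByRegex(str, EMAIL_REGEX).matches;
const extractContact = (str: string): string[] => removeByRegex(str, CONTACT_REGEX).matches;

// Made-up text standing in for the recognized card text
const sampleText = [
  'Taro Yamada',
  'Example Corp.',
  'taro.yamada@example.com',
  '+81 90-1234-5678',
].join('\n');

console.log(extractEmail(sampleText));    // [ 'taro.yamada@example.com' ]
console.log(extractContact(sampleText));  // [ '+81 90-1234-5678' ]
```

Note that the phone pattern is fairly permissive: a line that only holds a postal code such as `150-0002` also matches, so an address line on a real card may show up in the phone list.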

Step 3: Display your result

Let's create a basic UI using Ionic components.

```html
<!--
  Generated template for the VisionPage page.

  See http://ionicframework.com/docs/components/#navigation for more info on
  Ionic pages and navigation.
-->
<ion-header>
  <ion-navbar>
    <ion-title>Meishi</ion-title>
  </ion-navbar>
</ion-header>

<ion-content padding>
  <ion-row>
    <ion-col col-12 text-center>
      <button ion-button icon-start (tap)="captureAndUpload()">
        <ion-icon name="camera"></ion-icon>
        Capture Image
      </button>
    </ion-col>
    <ion-col col-12>
      <img width="100%" height="auto" [src]="image">
    </ion-col>
    <ion-col *ngIf="result$ | async as result">
      <h4>
        <span class="title">名前: </span><br>
        {{result.requiredEntities.PERSON}}
      </h4>
      <h4>
        <span class="title">Email:</span><br>
        <span *ngFor="let email of extractEmail(result.text)">{{email}}<br></span>
      </h4>
      <h4>
        <span class="title">電話番号:</span><br>
        <span *ngFor="let phone of extractContact(result.text)">{{phone}}<br></span>
      </h4>
      <h4>
        <span class="title">組織:</span><br>
        {{result.requiredEntities.ORGANIZATION}}
      </h4>
      <h4>
        <span class="title">住所:</span><br>
        {{result.requiredEntities.LOCATION}}
      </h4>
      <h4><span class="title">認識されたテキスト</span></h4>
      <h5>
        {{result.text}}
      </h5>
    </ion-col>
  </ion-row>
</ion-content>
```
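The template reads four fields from the Firestore document: `text` and the `PERSON`, `ORGANIZATION` and `LOCATION` entries of `requiredEntities`. The sketch below writes that shape down as a TypeScript interface so the binding is easier to follow. The interface name `CardResult`, the helper `describeCard` and the assumption that each entity is a plain optional string are mine; the actual document written by the cloud function may differ.

```typescript
// Assumed document shape, inferred only from the fields the template reads.
interface CardResult {
  text: string;
  requiredEntities: {
    PERSON?: string;
    ORGANIZATION?: string;
    LOCATION?: string;
  };
}

// Hypothetical helper: builds the same label/value pairs the template shows,
// with English labels and a fallback for entities that were not detected.
function describeCard(result: CardResult): string[] {
  const entities = result.requiredEntities;
  return [
    `Name: ${entities.PERSON || '(not found)'}`,
    `Organization: ${entities.ORGANIZATION || '(not found)'}`,
    `Address: ${entities.LOCATION || '(not found)'}`,
  ];
}

// Made-up document for illustration
const sampleResult: CardResult = {
  text: 'Taro Yamada\nExample Corp.',
  requiredEntities: { PERSON: 'Taro Yamada', ORGANIZATION: 'Example Corp.' },
};

console.log(describeCard(sampleResult).join('\n'));
```

The Japanese labels in the template are 名前 (name), 電話番号 (phone number), 組織 (organization), 住所 (address) and 認識されたテキスト (recognized text).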

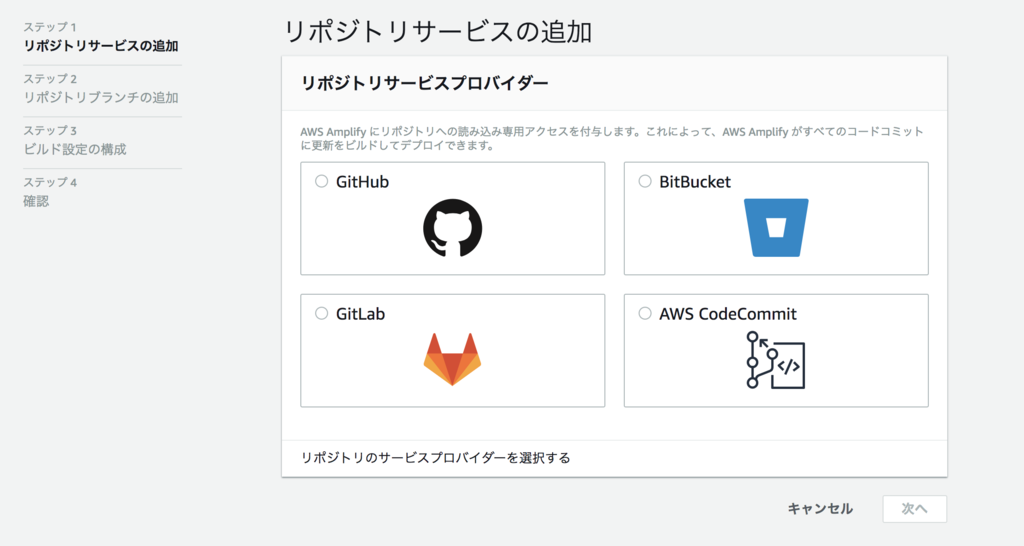

Step 4: Build the app for the platform of your choice

Finally, let's build the application for iOS or Android.

Run the following command to create an iOS build of the app:

```bash
ionic cordova build ios
```

Similarly, to generate the Android app, run the following command:

```bash
ionic cordova build android
```

Open the app on an emulator or on an actual device and test it yourself.

Congrats!! We just created a Business Card Reader app powered by Machine Learning :)

That's it for this post. See you soon with another one; until then,

Happy Learning :)
